Abstract

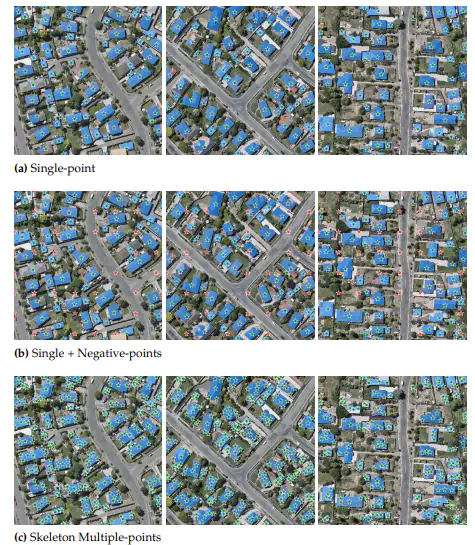

Foundation models have demonstrated unparalleled performance across diverse tasks in the vision, language, and multimodal domains. Notably, visual foundation models have surpassed prior task-specific models in various dense prediction tasks. Yet these models still target general benchmarks and are evaluated on curated datasets, and their practical adaptation to domain-specific tasks has received relatively limited attention. Remote sensing imagery is one such domain of significant importance because of its critical real-world applications. One example is instance segmentation of buildings, which enables precise identification and analysis of building footprints for applications including urban planning and infrastructure development. Convolutional Neural Networks (CNNs) have demonstrated remarkable capabilities in building rooftop instance segmentation and have led to cutting-edge models in this domain, but they have certain limitations. One prominent constraint is limited generalization: a highly accurate CNN-based building footprint segmentation model may perform worse when deployed on unseen data. One may resort to fine-tuning or retraining to recover the model’s performance. To address this generalization drawback of existing models, we present a novel approach that adapts foundation models. Among several candidate models, we focus on the Segment Anything Model (SAM), a powerful foundation model known for its class-agnostic image segmentation capabilities. We start by identifying the limitations of SAM, revealing its suboptimal performance on remote sensing imagery. Moreover, SAM offers no recognition abilities and therefore cannot classify or label the objects it localizes. To overcome these limitations, we propose adapting SAM via prompt engineering.
Concretely, we investigate 14 distinct single and composite prompting strategies, including a novel approach that enhances SAM’s performance by integrating a pre-trained CNN as a prompt generator. To the best of our knowledge, this is the first attempt to augment SAM with a CNN-based prompt generator that provides recognition capabilities. Through thorough quantitative and qualitative analysis, we evaluate each scenario on three remote sensing datasets: the WHU Building Dataset, the Massachusetts Buildings Dataset, and the AICrowd Mapping Challenge. Our results show a substantial improvement in SAM’s building segmentation accuracy. Specifically, for out-of-distribution performance on the WHU dataset, we observe improvements of 5.47% in IoU and 4.81% in F1-score. For in-distribution performance on the WHU dataset, we observe improvements of 2.72% in True-Positive-IoU and 1.58% in True-Positive-F1-score.
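The idea of using a pre-trained CNN as a prompt generator for SAM can be illustrated with a minimal sketch. It assumes the CNN (for example, an instance segmentation network) yields one binary mask per detected building, and it derives one box prompt and one interior point prompt from each mask. The function names `mask_to_prompts` and `masks_to_prompts` and this particular box-plus-point choice are hypothetical illustrations, not necessarily among the 14 strategies evaluated here. The returned keys are intended to mirror the `box`, `point_coords`, and `point_labels` arguments of `SamPredictor.predict` in the official `segment_anything` package (boxes in XYXY pixel order, points as (x, y) with label 1 for foreground); treat that correspondence as an assumption to verify against the package documentation. Because every prompt originates from a CNN detection, the CNN's class label can plausibly be carried over to SAM's output mask, which is one way a CNN prompt generator can supply the recognition ability SAM lacks.

```python
import numpy as np


def mask_to_prompts(mask: np.ndarray) -> dict:
    """Derive SAM-style prompts from one binary instance mask (hypothetical helper).

    Returns a box in XYXY pixel coordinates (inclusive max) and one
    foreground point in (x, y) order with label 1.
    """
    ys, xs = np.nonzero(mask)
    if xs.size == 0:
        raise ValueError("empty mask: no prompt can be derived")

    # Tight bounding box around the instance, in XYXY order.
    box = np.array([xs.min(), ys.min(), xs.max(), ys.max()], dtype=np.float32)

    # The centroid can fall outside non-convex footprints (e.g. L-shaped roofs),
    # so snap it to the nearest pixel that actually belongs to the mask.
    cy, cx = ys.mean(), xs.mean()
    i = np.argmin((ys - cy) ** 2 + (xs - cx) ** 2)
    point = np.array([[xs[i], ys[i]]], dtype=np.float32)
    label = np.array([1], dtype=np.int64)  # 1 marks a foreground point

    return {"box": box, "point_coords": point, "point_labels": label}


def masks_to_prompts(masks) -> list:
    """Build one prompt dict per non-empty CNN instance mask."""
    return [mask_to_prompts(m) for m in masks if m.any()]


if __name__ == "__main__":
    # Hypothetical L-shaped building footprint on a 10x10 tile.
    m = np.zeros((10, 10), dtype=bool)
    m[2:8, 2:4] = True  # vertical wing
    m[6:8, 2:8] = True  # horizontal wing
    p = mask_to_prompts(m)
    print(p["box"], p["point_coords"], p["point_labels"])
```

Snapping to the nearest in-mask pixel, rather than using the raw centroid, keeps the point prompt on the roof even for non-convex footprints, where a centroid point could otherwise prompt SAM to segment the background.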
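The IoU and F1-score figures reported above are standard overlap metrics. A minimal pixel-level sketch for a single pair of binary masks is shown below. The function name `iou_f1` is hypothetical, and the paper's exact aggregation (per instance, per image, or over the whole dataset) and its True-Positive variants are not specified here, so this should not be taken as the evaluation protocol used for the reported numbers.

```python
import numpy as np


def iou_f1(pred: np.ndarray, gt: np.ndarray) -> tuple:
    """Pixel-level IoU and F1-score between two binary masks (hypothetical helper)."""
    pred = pred.astype(bool)
    gt = gt.astype(bool)
    tp = np.logical_and(pred, gt).sum()   # building pixels found
    fp = np.logical_and(pred, ~gt).sum()  # background predicted as building
    fn = np.logical_and(~pred, gt).sum()  # building pixels missed
    denom = tp + fp + fn
    if denom == 0:
        # Both masks empty: treat as perfect agreement by convention.
        return 1.0, 1.0
    iou = tp / denom
    f1 = 2 * tp / (2 * tp + fp + fn)
    return float(iou), float(f1)


if __name__ == "__main__":
    pred = np.array([1, 1, 0, 0])
    gt = np.array([1, 0, 1, 0])
    print(iou_f1(pred, gt))  # tp=1, fp=1, fn=1
```

For a single mask pair the two metrics are linked by F1 = 2·IoU / (1 + IoU), so they rank predictions identically; they diverge once scores are averaged over many images or instances.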